nano banana pro vs nano banana 2: workflow guide

nano banana pro vs nano banana 2: what changed and why teams are re-testing

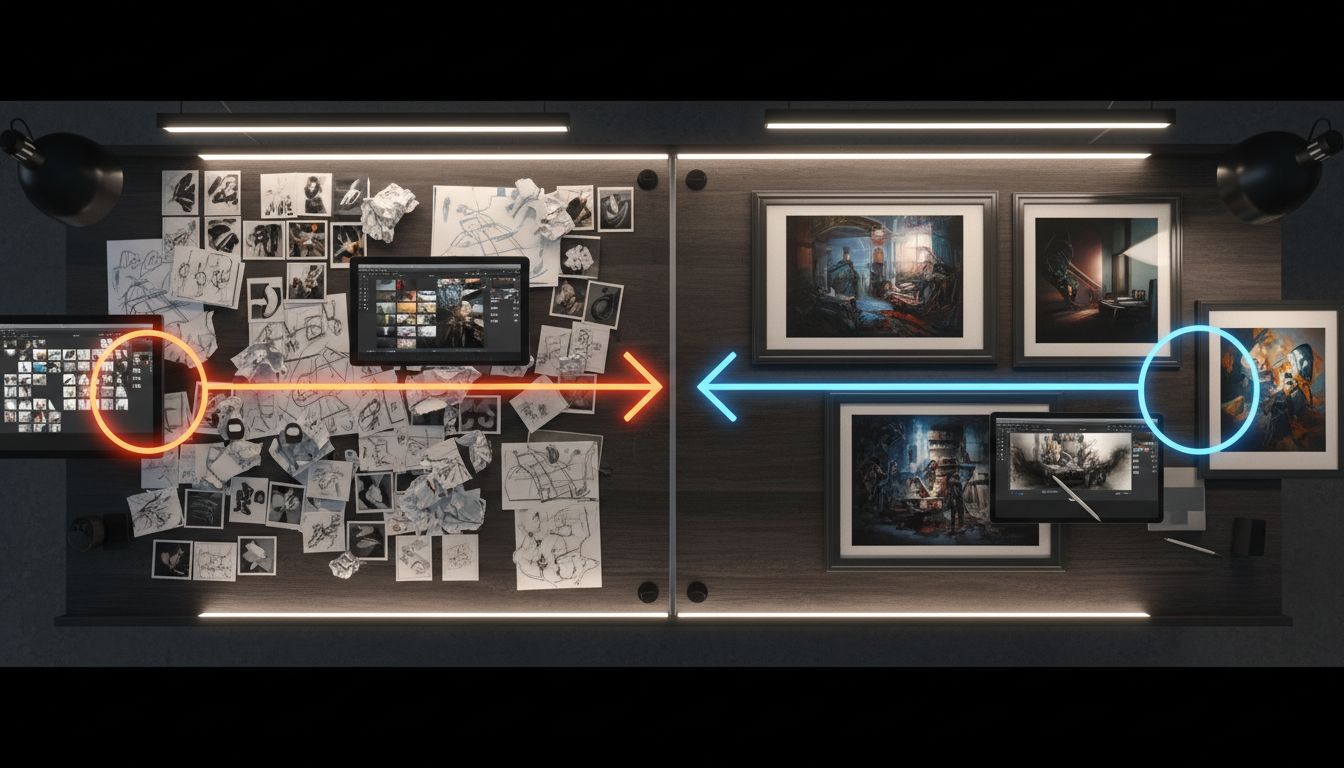

The latest wave of model updates made the nano banana 2 vs pro decision more operational than ideological. If you compare nano banana pro vs nano banana 2 on a single impressive output, you will miss the real trade-off: routing work by speed, complexity, and revision risk.

As of February 26, 2026, Google positioned Nano Banana 2 as a high-efficiency image generation path with Pro-like capabilities at Flash-class speed. In parallel, current Gemini model docs describe Nano Banana Pro as the professional image tier for context-heavy, instruction-dense production work. That is why nano banana 2 vs pro now feels less like "pick one winner" and more like "design a two-lane pipeline."

For most production teams, nano banana 2 vs pro decisions should be made at the task level:

- Drafting, exploration, and batch ideation usually favor nano banana 2.

- Final campaign assets, stricter instruction fidelity, and complex scene logic often favor nano banana pro.

nano banana 2 vs pro in the current Gemini lineup

When teams discuss nano banana 2 vs pro, naming confusion still causes bad decisions. The practical mapping is straightforward:

- nano banana 2 aligns with the Gemini 3.1 Flash Image Preview lane (speed, volume, balanced quality).

- nano banana pro aligns with the Gemini 3 Pro Image Preview lane (professional outputs, harder instruction handling).

That mapping matters because choosing between nano banana 2 and nano banana pro is also choosing whether a task needs Gemini 3 Pro-tier capability. If your prompt is simple and throughput-heavy, nano banana 2 is often enough. If your prompt stacks constraints, scene relationships, and brand-critical details, nano banana pro and its Gemini 3 Pro-style reasoning usually reduce downstream fixes.

What this means for latency, quality, and editing stability

In practice, the nano banana 2 vs pro trade-off breaks down into three measurable dimensions:

- Time-to-first-usable-image.

- Time-to-approved-final-image.

- Number of human correction loops.

Nano banana 2 can win the first metric. Nano banana pro often wins the second and third on difficult briefs. If you track only generation speed, your nano banana 2 vs pro policy will favor the fast lane even on briefs where it costs more review time than it saves.
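To keep the three metrics separate, you can compute them per lane from a simple request log. The sketch below assumes a hypothetical log format (`RequestLog`, filled in by your own tracking) and uses made-up sample numbers purely for illustration; it is not tied to any Gemini API.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class RequestLog:
    """One request's history in one lane (hypothetical tracking format)."""
    lane: str                # "nano banana 2" or "nano banana pro"
    first_usable_min: float  # minutes until the first usable image
    approved_min: float      # minutes until the final image was approved
    correction_loops: int    # human correction rounds before approval


def lane_metrics(logs: list[RequestLog], lane: str) -> dict[str, float]:
    """Return the median of each metric for one lane, reported separately."""
    rows = [r for r in logs if r.lane == lane]
    if not rows:
        raise ValueError(f"no logged requests for lane {lane!r}")
    return {
        "time_to_first_usable": median(r.first_usable_min for r in rows),
        "time_to_approved": median(r.approved_min for r in rows),
        "correction_loops": median(r.correction_loops for r in rows),
    }


# Made-up sample data for a difficult brief, for illustration only.
logs = [
    RequestLog("nano banana 2", 1.0, 60.0, 3),
    RequestLog("nano banana 2", 2.0, 50.0, 2),
    RequestLog("nano banana pro", 3.0, 30.0, 1),
    RequestLog("nano banana pro", 4.0, 20.0, 0),
]
fast = lane_metrics(logs, "nano banana 2")
pro = lane_metrics(logs, "nano banana pro")
print(fast)
print(pro)
```

Medians are used instead of means so one runaway request does not dominate the comparison; swap in whatever summary statistic your team already reports.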

Why Gemini 3 Pro appears in this comparison

Gemini 3 Pro is relevant because many image tasks fail due to reasoning, not style. Multi-object constraints, placement logic, and preserving intent across edits are reasoning problems. In those cases, nano banana pro inherits the strongest benefits from the Gemini 3 Pro tier, while nano banana 2 focuses on high-efficiency throughput.

Direct comparison: nano banana 2 vs pro for production decisions

| Decision area | nano banana 2 | nano banana pro |
|---|---|---|
| Core profile | High-efficiency, speed-first image generation and editing | State-of-the-art contextual image generation for professional output |
| Typical use | High-volume ideation, rapid variant testing, fast edits | Complex instructions, high-stakes assets, stricter creative control |
| Strength in a pipeline | Draft lane and iteration lane | Finalization lane and approval lane |
| Latency posture | Optimized for speed and volume | Slower per run, usually fewer correction rounds on hard briefs |
| Constraint-heavy prompts | Good for mainstream cases | Better when prompts carry layered intent and detailed constraints |
| Team impact | Protects throughput during peak demand | Protects review quality for hero assets |
| Best deployment model | Default model for broad generation | Escalation model behind clear gates |

The reason nano banana 2 vs pro keeps coming up in community threads is simple: both can look close on easy prompts. The gap tends to open when prompts demand stricter instruction following.

Two mini-case scenarios for nano banana 2 vs pro

Scenario 1: marketplace catalog team

A retail catalog team must ship 1,500 localized visuals weekly. They run nano banana 2 for concept expansion, region variants, and fast edit loops. Only shortlisted assets with dense composition requirements are escalated to nano banana pro.

Outcome: the team keeps deadline reliability while reducing premium-model usage. In this nano banana 2 vs pro setup, speed protects operations and Pro protects final quality where it matters.

Scenario 2: B2B launch campaign with technical visuals

A product marketing team creates launch assets with strict object placement and dense semantic requirements. They test concepts in nano banana 2, then move final candidates to nano banana pro for polishing and approval.

Outcome: generation latency rises per image, but approval time drops because outputs need fewer manual corrections. Here, the nano banana 2 vs pro decision is driven by review risk, and Gemini 3 Pro-aligned behavior becomes a practical advantage.
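The scenario's arithmetic can be made explicit. Assume, as a simplification, that each correction loop costs one human review plus one regeneration; total time to approval is then the first generation plus all loops. The latencies and review time below are hypothetical placeholders, not measured Gemini figures.

```python
def seconds_to_approval(gen_s: int, loops: int, review_s: int) -> int:
    """First generation, then `loops` rounds of (human review + regeneration).

    Simplified model: ignores queueing and the final sign-off review.
    """
    return gen_s + loops * (review_s + gen_s)


REVIEW_S = 15 * 60  # hypothetical: 15 minutes of human review per loop

fast_lane = seconds_to_approval(gen_s=10, loops=4, review_s=REVIEW_S)
pro_lane = seconds_to_approval(gen_s=40, loops=1, review_s=REVIEW_S)
print(fast_lane, pro_lane)  # 3650 980
```

With these placeholder numbers the Pro lane is four times slower per run but roughly 3.7x faster to approval; where the crossover falls depends almost entirely on how many loops each lane needs on your briefs.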

Actionable checklist: how to choose nano banana 2 vs pro in 7 steps

1) classify business risk before generation

Tag each request as exploration, standard production, or brand-critical. Your nano banana 2 vs pro routing starts here.
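One way to enforce tagging is to make the tag a required, closed set, so untagged or misspelled requests fail instead of silently defaulting. The `Risk` enum and the default-lane mapping below are assumptions for illustration: brand-critical work starts in the Pro lane, and everything else starts in nano banana 2 and can still be escalated by later steps.

```python
from enum import Enum


class Risk(Enum):
    """The three business-risk tags from step 1 (hypothetical encoding)."""
    EXPLORATION = "exploration"
    STANDARD = "standard production"
    BRAND_CRITICAL = "brand-critical"


def default_lane(risk: Risk) -> str:
    """Starting lane per risk tag; later steps may still escalate."""
    return "nano banana pro" if risk is Risk.BRAND_CRITICAL else "nano banana 2"


def tag_request(label: str) -> Risk:
    """Parse a free-text tag; raises ValueError for anything outside the three tags."""
    return Risk(label.strip().lower())


print(default_lane(tag_request("Brand-critical")))  # nano banana pro
```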

2) score prompt complexity

Use a quick 1-5 score for constraint density, reference fidelity needs, and scene logic depth. High-scoring requests should route to nano banana pro, especially when they need Gemini 3 Pro-level reasoning.
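A minimal scoring sketch, assuming a hypothetical rule that any single dimension scoring 4 or higher escalates. Taking the maximum rather than the average is a deliberate choice: one hard constraint (say, exact product placement) can sink a draft even when the rest of the brief is easy. Tune the threshold against your own A/B data.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Complexity:
    """Step 2 scores, each on a 1-5 scale (field names are hypothetical)."""
    constraint_density: int
    reference_fidelity: int
    scene_logic_depth: int

    def __post_init__(self) -> None:
        # Reject scores outside the 1-5 scale at construction time.
        for f in fields(self):
            value = getattr(self, f.name)
            if not 1 <= value <= 5:
                raise ValueError(f"{f.name} must be 1-5, got {value}")


def route(score: Complexity, threshold: int = 4) -> str:
    """Escalate when the hardest dimension reaches the threshold."""
    hardest = max(score.constraint_density, score.reference_fidelity, score.scene_logic_depth)
    return "nano banana pro" if hardest >= threshold else "nano banana 2"


print(route(Complexity(2, 2, 3)))  # nano banana 2
print(route(Complexity(2, 5, 1)))  # nano banana pro
```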

3) run a blind A/B pack

Test at least 20 matched prompts in both lanes, and hide from reviewers which lane produced each output. Evaluate instruction adherence, visual consistency, and rework time.
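To keep the A/B pack blind, reviewers should see each matched pair under neutral labels, with the lane mapping stored separately. This sketch assumes you already have one output reference (a file path or URL) per prompt per lane; `blind_pack` is a hypothetical helper, and its 20-prompt minimum simply mirrors the step above.

```python
import random


def blind_pack(prompts, outputs_fast, outputs_pro, seed=None, min_prompts=20):
    """Return (review_sheet, answer_key) with each pair's order randomized.

    review_sheet: what reviewers see, outputs labeled only "A" and "B".
    answer_key: which lane produced "A" and "B", kept away from reviewers.
    """
    if not (len(prompts) == len(outputs_fast) == len(outputs_pro)):
        raise ValueError("every prompt needs exactly one output per lane")
    if len(prompts) < min_prompts:
        raise ValueError(f"use at least {min_prompts} matched prompts")
    rng = random.Random(seed)
    sheet, key = [], []
    for prompt, fast, pro in zip(prompts, outputs_fast, outputs_pro):
        pair = [("nano banana 2", fast), ("nano banana pro", pro)]
        rng.shuffle(pair)  # randomize which lane appears as "A"
        sheet.append({"prompt": prompt, "A": pair[0][1], "B": pair[1][1]})
        key.append({"A": pair[0][0], "B": pair[1][0]})
    return sheet, key
```

After reviewers score the sheet, join their "A"/"B" scores with the answer key row by row to attribute adherence and rework time to each lane.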

4) measure approved-asset cost, not raw generation cost

Nano banana 2 vs pro should be judged by cost per approved deliverable, not cost per single output.
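Cost per approved deliverable adds human review labor to generation spend and divides by what actually shipped. The sketch below uses placeholder prices and rates (not published Gemini pricing) to show how a lane that is cheaper per output can still be more expensive per approved asset.

```python
def cost_per_approved(runs: int, price_per_run: float,
                      review_hours: float, hourly_rate: float,
                      approved: int) -> float:
    """(generation spend + review labor) / approved deliverables."""
    if approved <= 0:
        raise ValueError("no approved deliverables to divide by")
    return (runs * price_per_run + review_hours * hourly_rate) / approved


# Placeholder inputs for one batch of 20 approved assets; substitute your own.
HOURLY_RATE = 50.0
fast_raw = 400 * 0.04  # many cheap runs
pro_raw = 120 * 0.15   # fewer, pricier runs
fast_cpa = cost_per_approved(400, 0.04, review_hours=30, hourly_rate=HOURLY_RATE, approved=20)
pro_cpa = cost_per_approved(120, 0.15, review_hours=10, hourly_rate=HOURLY_RATE, approved=20)
print(f"raw: {fast_raw:.2f} vs {pro_raw:.2f}")          # raw: 16.00 vs 18.00
print(f"per approved: {fast_cpa:.2f} vs {pro_cpa:.2f}")  # per approved: 75.80 vs 25.90
```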

5) reserve Pro for defined trigger conditions

Create hard triggers: hero creative, complex text-and-image intent, legally sensitive visuals, or high-traffic campaign surfaces.
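Hard triggers work best as a fixed, named set checked on every request, so escalation becomes a lookup rather than a judgment call under deadline pressure. The flag names below are hypothetical encodings of the four triggers above.

```python
HARD_TRIGGERS = frozenset({
    "hero_creative",
    "complex_text_and_image_intent",
    "legally_sensitive",
    "high_traffic_surface",
})


def matched_triggers(request_flags: set[str]) -> frozenset[str]:
    """Hard triggers present on a request; empty means stay in nano banana 2."""
    return HARD_TRIGGERS & request_flags


def lane_for(request_flags: set[str]) -> str:
    return "nano banana pro" if matched_triggers(request_flags) else "nano banana 2"


print(lane_for({"seasonal", "legally_sensitive"}))  # nano banana pro
print(lane_for({"seasonal"}))                        # nano banana 2
```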

6) document escalation rules in your design ops guide

If escalation is informal, teams overuse Pro under stress. Make nano banana 2 vs pro triggers explicit.
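Documented rules also make overuse measurable. A minimal audit, assuming a hypothetical escalation log of `(request_id, trigger)` pairs where `trigger` is `None` when someone escalated without citing a rule:

```python
from collections import Counter


def audit_escalations(escalations, documented_triggers):
    """Split Pro escalations into documented and informal ones.

    escalations: iterable of (request_id, trigger_or_None) pairs.
    Returns (informal_request_ids, count_per_documented_trigger).
    """
    informal, by_trigger = [], Counter()
    for request_id, trigger in escalations:
        if trigger in documented_triggers:
            by_trigger[trigger] += 1
        else:
            informal.append(request_id)  # no trigger, or one missing from the guide
    return informal, by_trigger


log = [("r1", "hero_creative"), ("r2", None), ("r3", "because_deadline"), ("r4", "hero_creative")]
informal, counts = audit_escalations(log, {"hero_creative", "legally_sensitive"})
print(informal)                 # ['r2', 'r3']
print(counts["hero_creative"])  # 2
```

A rising share of informal escalations during busy weeks is a sign that routing has drifted back to ad hoc decisions.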

7) recalibrate monthly

Model behavior evolves quickly. Re-run your nano banana 2 vs pro benchmark pack every month.
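A monthly re-run is only useful if someone compares it with the previous month. A small sketch, assuming you store each month's benchmark as a flat dict of metric name to value (the metric names and the 10% tolerance are hypothetical):

```python
def drifted_metrics(previous: dict[str, float], current: dict[str, float],
                    tolerance: float = 0.10) -> dict[str, float]:
    """Return {metric: relative change} for metrics that moved more than `tolerance`."""
    drifted = {}
    for name, old in previous.items():
        if name not in current:
            continue  # metric no longer in the pack; review the pack itself
        new = current[name]
        if old == 0:
            change = 0.0 if new == 0 else float("inf")  # avoid dividing by zero
        else:
            change = (new - old) / old
        if abs(change) > tolerance:
            drifted[name] = change
    return drifted


# Hypothetical monthly benchmark summaries.
january = {"nb2_adherence": 0.80, "pro_adherence": 0.90, "nb2_rework_min": 40.0}
february = {"nb2_adherence": 0.92, "pro_adherence": 0.91, "nb2_rework_min": 30.0}
print(sorted(drifted_metrics(january, february)))  # ['nb2_adherence', 'nb2_rework_min']
```

A flagged improvement in the fast lane is a cue to re-test whether your escalation thresholds can move up.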

Common mistakes that break nano banana 2 vs pro evaluations

Using one viral prompt as a universal benchmark

Single examples can be interesting but rarely represent your production mix.

Mixing draft and final metrics into one score

Draft speed and final approval quality are different objectives. Keep them separate.

Ignoring volume spikes

A model policy that works at low volume can fail during campaign weeks.
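A quick way to catch this before campaign week is to project the Pro lane's load at peak volume. The capacity figure stands in for whatever actually limits your Pro lane (quota, budget, or reviewer hours) and, like the 10% escalation share, is a hypothetical placeholder.

```python
def pro_lane_load(weekly_requests: int, escalation_pct: int, pro_capacity: int) -> tuple[int, bool]:
    """Projected weekly Pro jobs and whether they exceed the lane's capacity.

    escalation_pct is the share of requests your policy sends to Pro, in whole percent.
    """
    projected = -(-weekly_requests * escalation_pct // 100)  # ceiling division
    return projected, projected > pro_capacity


print(pro_lane_load(1500, 10, pro_capacity=200))  # (150, False): normal week
print(pro_lane_load(4000, 10, pro_capacity=200))  # (400, True): campaign spike
```

If a spike would overload Pro, decide in advance whether to raise capacity for campaign weeks or tighten triggers temporarily.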

Treating Gemini 3 Pro references as marketing labels only

For complex work, Gemini 3 Pro-tier reasoning can reduce revision loops. Ignoring that can distort your nano banana 2 vs pro policy.

FAQs: nano banana pro vs nano banana 2

Is nano banana 2 replacing nano banana pro?

Not in a practical production sense. Nano banana 2 is the high-efficiency default for many tasks, while nano banana pro remains the stronger lane for complex or professional-grade outputs.

Is nano banana 2 vs pro mainly a speed vs quality choice?

Partly, but not only. It is also a revision-risk and instruction-fidelity choice. A slower run can still be faster to approval.

How does Gemini 3 Pro fit into nano banana 2 vs pro?

Gemini 3 Pro is the reasoning-heavy tier associated with the Pro image lane. In difficult, multi-constraint prompts, that reasoning depth often improves final reliability.

When should a startup use nano banana pro?

Use nano banana 2 as default, then escalate to nano banana pro for revenue-critical visuals, launch assets, or prompts that repeatedly fail in the fast lane.

What is the fastest way to set a policy for nano banana 2 vs pro?

Create a fixed prompt pack, blind-review outputs, track time-to-approved-asset, and set routing thresholds from that data.
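Setting a routing threshold from A/B data can be as simple as trying every cut-off on the complexity score and keeping the one that minimizes total rework. This sketch assumes each A/B record holds a hypothetical 1-5 complexity score plus measured rework minutes for both lanes; replace rework minutes with cost per approved deliverable if that is your target metric.

```python
def total_rework(records, threshold):
    """Total rework minutes if prompts scoring >= threshold go to nano banana pro."""
    return sum(pro if score >= threshold else fast for score, fast, pro in records)


def best_threshold(records):
    """Pick the cut-off (1-5, or 6 = never escalate) with the least total rework.

    Ties go to the higher threshold, i.e. fewer escalations to Pro.
    """
    return min(range(1, 7), key=lambda t: (total_rework(records, t), -t))


# Illustrative records: (complexity score, rework min in nano banana 2, rework min in pro).
records = [(1, 5, 6), (2, 6, 7), (3, 10, 9), (4, 30, 12), (5, 45, 15)]
print(best_threshold(records))  # 3
```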

Final recommendation

A durable nano banana 2 vs pro strategy is a routing system, not a one-time model preference. Keep nano banana 2 as your throughput engine, keep nano banana pro as your precision lane, and tie requests that need Gemini 3 Pro-level reasoning to measurable escalation triggers.

If you want to test your own workflow immediately, run your prompt pack through both nano banana 2 and nano banana pro, then lock your team rules based on approved-output metrics.